Agentic Orgs Are Copying the Wrong Blueprint

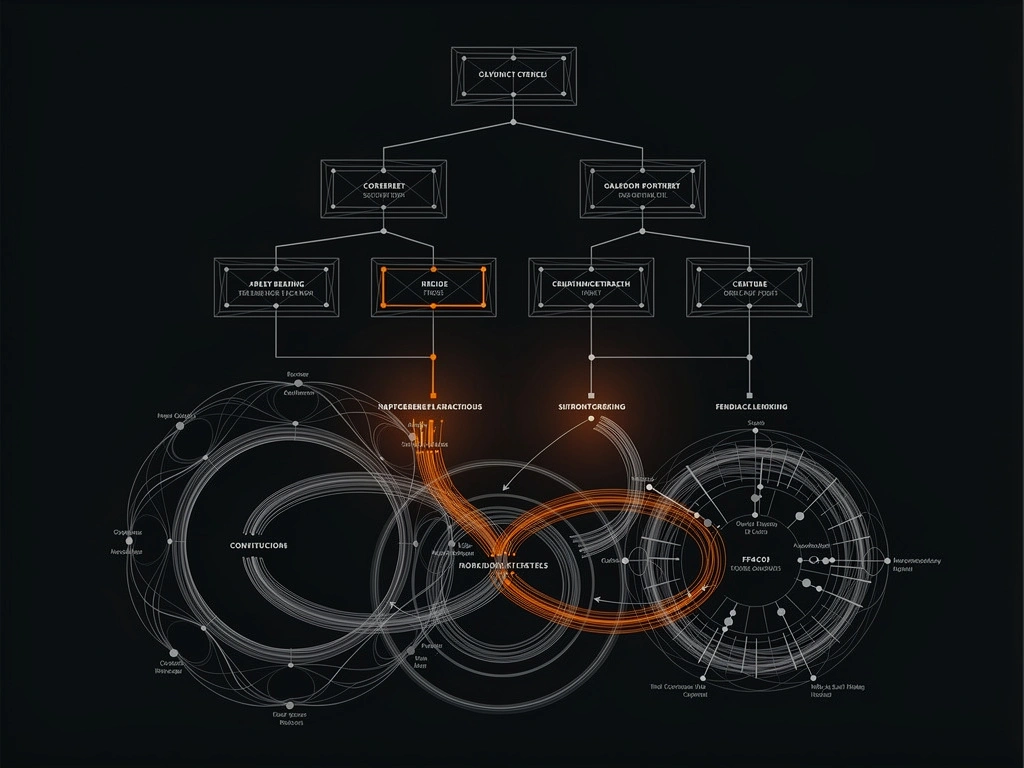

AI companies are building digital replicas of 20th-century org charts and calling it innovation. The evidence shows this approach fails on cost, reliability, and speed — and here's what to build instead.

Most "AI companies" are doing something bizarre: staffing a simulated company with bots.

CEO agent. CFO agent. CMO agent. Status updates. Delegation chains. Approval gates. "Cross-functional collaboration" between bots.

This is the wrong blueprint.

The core thesis is simple: AI organizations should not mimic human corporate structures. The evidence now shows that this approach systematically fails on cost, reliability, and speed.

The reason is deeper than tooling quality. We are applying organizational theories built for human limits to systems with a fundamentally different set of constraints. That mismatch is expensive.

1. Human org charts are information routing protocols for biological hardware

The modern org chart did not descend from heaven. It emerged as an engineering workaround for biological constraints — and its intellectual lineage tells you everything about why it's wrong for AI.

Adam Smith (1776) made the foundational case for division of labor: humans gain skill slowly, switch context poorly, and can't master every production step at once. His pin factory example showed that splitting work across specialists made each one dramatically more productive. This was true — for humans. An AI system switches domains in milliseconds and has no learning curve between tasks.

The organizational hardware came next. Roman legions solved large-scale coordination with nested hierarchy (8 → 80 → 480 → 5,000), each layer with a named commander aggregating information upward and relaying decisions downward. Prussian military reformers, after Napoleon destroyed their forces at Jena in 1806, invented the General Staff — a dedicated class of officers whose job was not to fight but to plan, process information, and coordinate. This was middle management before the term existed. When US railroads grew too complex for informal management in the 1850s, Daniel McCallum created what's considered the first corporate org chart (1854) — formalizing the same hierarchical logic. Train collisions were killing people. The chart was an information routing protocol to prevent them.

Frederick Taylor (1911) broke work into specialized tasks, measured everything, and separated thinking from doing. The entire Taylorist premise — that planning and execution must live in different people because one human can't do both well — dissolves when the worker can analyze, adjust, and optimize in real-time.

Max Weber (1922) codified bureaucracy as a control system for human unpredictability — favoritism, arbitrary decisions, inconsistent application of rules. Bureaucracy wasn't pointless paper-pushing. It was a rational response to the fact that humans are unreliable, biased, and political. AI systems have different failure modes, but human-style political behavior isn't among them.

Peter Drucker (1954 onward) introduced decentralization and management by objectives to address information bottlenecks in human hierarchies. His insight was that top-down command couldn't scale because the top couldn't know enough. Distribute decision-making to people closer to the problem. Brilliant — for organisms that can't share state.

Every subsequent attempt to escape hierarchy failed. McKinsey's matrix organization (1959) tried to balance functional specialties with divisional units. Spotify popularized cross-functional squads. Zappos attempted Holacracy. Valve operated with no formal hierarchy. Each experiment revealed something about hierarchy's limitations, and each reverted or failed at scale. Spotify moved back toward conventional management. Zappos saw significant attrition. Valve's model couldn't scale beyond a few hundred people.

The constraint was always the same: as organizations grew, they reverted to hierarchical coordination because no alternative information routing mechanism was powerful enough to replace it.

Until now.

2. The industry default is "virtual corporate theater"

The dominant pattern from 2024 to 2026 has been "build a fake company out of agents." Roleplay frameworks with predefined CEO/CTO/programmer/tester structures. "AI departments" for legal, accounting, marketing, and operations. Daily standups, escalation paths, and cross-agent syncs translated directly from human rituals.

Someone built AI agents for CEO, CFO, CMO, CTO, and COO. They spontaneously started arguing with each other and exhibiting corporate dysfunction — the CFO challenged the CEO's authority, the CTO snapped at contradictory directives. None of it was programmed. The dysfunction emerged from the structure itself.

This is understandable. Human org charts are familiar, auditable, and easy to explain to investors. But familiarity is not fitness.

The horse was a proven transportation model too. Cars still don't need legs.

3. The empirical case against org-chart agents

If this were just an opinion piece, we could stop at first principles. But we now have enough data to say something stronger: the copied human model is not merely inelegant — it performs worse.

Google DeepMind: coordination multiplies error. DeepMind's scaling studies found that unstructured multi-agent setups amplify errors by as much as 17.2x relative to single-agent baselines. Not 17% worse. Seventeen times worse. Even centralized coordination only contained amplification to 4.4x. On sequential tasks, adding agents degraded outcomes by 39–70% because communication overhead consumed the reasoning budget. Performance gains plateaued at 4 agents.

UC Berkeley MAST: coordination is the top killer. The MAST analysis evaluated multi-agent traces across major frameworks and reported failure rates of 41% to 86.7%. The largest failure bucket — 36.9% of all failures — was coordination breakdown. Not model stupidity. Not missing tools. The architecture itself creating brittle handoffs.

McEntire: controlled tests expose the org-chart trap. Jeremy McEntire ran the most pointed experiment. Same model. Same task (build a 7-service microservices backend). Same $50 budget. Four architectures:

- Single agent: 100% success (28/28)

- Hierarchical multi-agent: 64% success

- Stigmergic swarm: 32% success

- Gated pipeline (org chart): 0% success — spent its entire budget on planning, never wrote a line of code

His conclusion: "No career incentives. No ego. No politics. No fatigue. No cultural norms. No status competition. The agents were language models executing prompts. The dysfunction emerged anyway."

You can remove humans and still keep organizational pathology — if you preserve the wrong structure.

Gartner: the market consequence. Gartner projects that over 40% of agentic AI projects will be canceled by 2027 due to escalating costs and unclear value. A large share of those costs isn't "AI is expensive" in the abstract. It's architecture cost: handoff overhead, coordination retries, duplicated context windows, and token burn from agents explaining work to other agents.

4. The heart of the issue: we don't know yet

This section matters most. Read it before the solutions.

The strongest position is not "we have solved AI-native organization design." We haven't.

The strongest position is this:

- The inherited human blueprint is failing in measurable ways.

- We have promising alternatives.

- We do not yet have a fully general, settled theory.

That is not weakness. That is scientific honesty.

Peter Drucker did not appear immediately after the first org charts. It took nearly a century of industrial evolution to formalize management principles that fit the economics and cognition of the era. We're in an equivalent early phase now. The Peter Drucker of AI organizations hasn't emerged yet. Anyone claiming otherwise is selling something.

AI has constraints too — just different ones.

It's tempting to frame AI as limitless. It's not:

- Context windows are finite. A million-token window sounds limitless until you fill it with six months of financial records, customer interactions, and legal documents. Then you're right back to McCallum's problem: too much information for one entity.

- Context-cost tradeoff is real. Every token added to the window increases latency and cost. Omniscience and speed are in tension.

- Reliability degrades across chains. At 95% per-step reliability, a 20-step pipeline succeeds only about 36% of the time (0.95^20 ≈ 0.36), so it fails nearly two-thirds of the time. This is arithmetic, not a bug.

- Memory is engineered, not innate. Persistence must be deliberately built — different from human memory decay, but still a constraint that shapes architecture.
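
The chain-reliability figure in the list above can be checked directly. This is a worked calculation under a simplifying assumption: every step succeeds independently with the same probability. The symbols p (per-step success) and n (number of steps) are introduced here for illustration.

```latex
P_{\text{success}} = p^{n}
\qquad\Rightarrow\qquad
0.95^{20} \approx 0.358,
\qquad
P_{\text{failure}} = 1 - 0.95^{20} \approx 0.642
```

Real pipelines rarely have perfectly independent steps, so treat this as a rough guide to the shape of the curve rather than a prediction.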

So the answer is not "one immortal god-agent does everything forever." But the answer is also not "twenty roleplay executives send each other memos."

What "we don't know yet" does NOT mean. It does not mean all architectures are equally valid. It does not mean we should keep copying hierarchies until academia provides a framework. It does not mean we pause.

It means: act on what the evidence already says, stay honest about unresolved frontiers, and experiment aggressively with rapid teardown of losing patterns.

Premature certainty is especially dangerous in agent systems because architecture hardens quickly. Once teams build dashboards, staffing narratives, and governance docs around role-based agents, the social cost of redesign spikes. Everyone starts defending yesterday's structure because too many things depend on it. That's exactly how bad organizational designs survive in human companies — and we're replaying it with AI.

5. Independent convergence: Block arrives at the same conclusion

In March 2026, Block — the company behind Square, Cash App, and Afterpay — published "From Hierarchy to Intelligence," arriving at a remarkably similar thesis from the corporate side. They trace the same historical lineage, reach the same diagnosis (hierarchy is an information routing protocol built for human limits), and propose replacing it with AI-native coordination. We arrived at a parallel conclusion from the fully-autonomous side: loops on shared state instead of role-based hierarchy. When a $40B+ public company and a team running fully autonomous AI agents independently reach the same conclusion, that's not a coincidence — it's a signal.

6. A real-world case: TomBot's reorganization

This is not hypothetical. It happened this week.

Before: role-based hierarchy. TomBot ran six role-defined agents modeled after a conventional company: CEO, CMO, Content, CPO, CTO, Replyguy. On paper, "professional." In practice, it reproduced every pathology the research warns about.

72 heartbeats per day. Recurring coordination churn. Tokens burned on updates and approvals instead of execution. Agents pausing for downstream sign-off instead of closing loops. The system was busy. It was not fast.

After: loops on shared state. 6 → 2. Six role agents became two autonomous loops, plus one scheduled job:

- Growth Loop (autonomous)

- Product Loop (autonomous)

- Weekly strategy cron (lightweight periodic alignment)

Critical design change: no inter-agent communication layer. Both loops read/write shared state files. Coordination happens through state, not chat.

Results:

- ~40–60% token cost reduction

- No inter-agent messaging overhead: the messaging layer simply doesn't exist

- Faster execution cycles — end-to-end loop ownership, no approval ping-pong

This doesn't prove loops are universally optimal. It does show that deleting hierarchy theater can immediately improve economics and throughput in a live system. 6 → 2. In production. Not a demo.

7. What to build instead: principles, not cosplay

If the goal is a real AI organization — not a theatrical one — start here.

Optimize for loops, not titles. The fundamental unit should be a measurable cycle: Observe → Decide → Act → Measure → Update state. One agent owns the full loop. What matters is closed-loop completion with local feedback. This draws from OODA loops (military strategy), control theory (cybernetics), and reinforcement learning — all domains that organize around cycles, not identities. A thermostat doesn't have a CEO. It has a sensor, a target, and a cycle.
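
To make the cycle concrete, here is a minimal sketch of one agent owning a full Observe → Decide → Act → Measure → Update loop, using the thermostat analogy. Everything named here (`LoopState`, `run_cycle`, the 0.5 correction factor) is an assumption made for illustration, not a description of any particular system.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class LoopState:
    """Facts the loop carries between cycles (hypothetical shape)."""
    target: float
    reading: float
    history: list = field(default_factory=list)


def run_cycle(state: LoopState, act: Callable[[float], float]) -> LoopState:
    # Observe: read the current value from state.
    observed = state.reading
    # Decide: move halfway toward the target (an illustrative policy).
    correction = 0.5 * (state.target - observed)
    # Act: apply the correction through the caller-supplied effector.
    new_reading = act(observed + correction)
    # Measure: record how far the loop still is from its target.
    error = abs(state.target - new_reading)
    # Update state: persist the outcome so the next cycle starts from it.
    state.reading = new_reading
    state.history.append(error)
    return state


state = LoopState(target=21.0, reading=17.0)
for _ in range(5):
    state = run_cycle(state, act=lambda value: value)
```

The point of the shape is that nothing in the cycle waits on another agent: the loop closes on its own measurement.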

Minimize handoffs aggressively. Every handoff is a cost and a failure opportunity. Require a justification for each one. If two agents can be merged without violating safety, merge them. The compound reliability math is brutal: at 95% per-step reliability, 10 handoffs leave about 60% end-to-end success, and 20 handoffs leave about 36%.
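
The handoff arithmetic is easy to turn into a budgeting tool. The sketch below assumes handoffs succeed independently at the same rate, which is a simplification; the function names are made up for this example.

```python
import math


def end_to_end_success(per_step: float, steps: int) -> float:
    """Probability that every step succeeds, assuming independent steps."""
    return per_step ** steps


def max_steps(per_step: float, target: float) -> int:
    """Largest step count that keeps end-to-end success at or above target.

    Expects 0 < per_step < 1 and 0 < target <= 1.
    """
    return math.floor(math.log(target) / math.log(per_step))


for steps in (10, 20):
    print(steps, round(end_to_end_success(0.95, steps), 2))
```

Run the other way, `max_steps(0.95, 0.9)` asks how many handoffs a design can afford if it needs 90% end-to-end success. Under these assumptions the answer is small, which is the argument for merging agents wherever safety allows.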

Use shared state as the default coordination substrate. If agents can read/write durable shared state, prefer that over conversational mediation. Messages should be exceptions, not the bloodstream. This is closer to how microservices communicate through databases than how humans communicate through Slack — and that's the point.
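
A rough sketch of what coordination through state can look like, assuming a single JSON file on local disk. The file layout, key names, and helper names are hypothetical. The write goes to a temporary file and is then swapped in with `os.replace`, so a reader never sees a half-written file.

```python
import json
import os
import tempfile
from pathlib import Path


def read_state(path: Path) -> dict:
    """Return the shared state, or an empty dict if no file exists yet."""
    if not path.exists():
        return {}
    return json.loads(path.read_text(encoding="utf-8"))


def write_state(path: Path, updates: dict) -> dict:
    """Merge updates into the state and replace the file in one step."""
    state = read_state(path)
    state.update(updates)
    # Temp file lives in the same directory so the final swap stays on one filesystem.
    fd, tmp_name = tempfile.mkstemp(dir=path.parent, suffix=".tmp")
    with os.fdopen(fd, "w", encoding="utf-8") as handle:
        json.dump(state, handle)
    os.replace(tmp_name, path)
    return state


# Hypothetical usage: two loops coordinate through one file, with no messages.
state_file = Path(tempfile.mkdtemp()) / "state.json"
write_state(state_file, {"product.latest_release": "v2"})    # product loop writes
release = read_state(state_file)["product.latest_release"]   # growth loop reads
write_state(state_file, {"growth.announced": release})       # growth loop writes
```

This sketch does not lock the file, so two loops writing at the same moment could overwrite each other's updates. A real deployment would need a lock, per-loop files, or a database.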

Separate compute tiers, not fake departments. Expensive models for planning and hard judgment. Cheap models for routine execution. Route intelligently. This is what Klarna did — one system handling 2.3 million conversations per month, equivalent to 853 FTEs, saving $60M. Not an AI org chart. A smart router directing tasks to the right compute tier.
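
As an illustration of routing by compute tier rather than by job title, here is a deliberately simple sketch. It is not a description of Klarna's system. The tier names, the `Task` fields, and the routing rule are all assumptions chosen for the example.

```python
from dataclasses import dataclass

EXPENSIVE_TIER = "large-model"  # hypothetical tier names
CHEAP_TIER = "small-model"


@dataclass(frozen=True)
class Task:
    description: str
    needs_judgment: bool  # planning, ambiguous trade-offs
    high_stakes: bool     # mistakes are costly or hard to reverse


def route(task: Task) -> str:
    """Pick a tier from properties of the work, not from a role name."""
    if task.needs_judgment or task.high_stakes:
        return EXPENSIVE_TIER
    return CHEAP_TIER


tasks = [
    Task("draft quarterly plan", needs_judgment=True, high_stakes=False),
    Task("reformat a CSV export", needs_judgment=False, high_stakes=False),
]
assignments = {task.description: route(task) for task in tasks}
```

In practice the two boolean flags would likely come from a classifier or from task metadata. The design choice that matters is that the router inspects the task, and no "department" is involved.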

Keep structure where risk requires it. Not every hierarchy artifact is useless. Retain explicit checkpoints for compliance, security boundaries, regulated approvals, and human override. But keep these as control constraints, not identity theater.
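
One way to keep checkpoints as control constraints is a small policy gate that every action passes through. The sketch below is one possible shape. `PolicyGate`, the loop names, and the action names are hypothetical. The gate enforces which caller may invoke which action, requires human approval for flagged actions, and logs every decision for later audit.

```python
from dataclasses import dataclass, field


@dataclass
class PolicyGate:
    """Checks each action against grants and records the decision."""
    grants: dict       # caller name -> set of allowed action names
    needs_human: set   # actions that always require human approval
    audit_log: list = field(default_factory=list)

    def check(self, caller: str, action: str, human_approved: bool = False) -> bool:
        allowed = action in self.grants.get(caller, set())
        if allowed and action in self.needs_human:
            allowed = human_approved
        # Every decision is logged, allowed or not, so it can be traced later.
        self.audit_log.append({"caller": caller, "action": action, "allowed": allowed})
        return allowed


gate = PolicyGate(
    grants={
        "growth_loop": {"publish_post"},
        "finance_loop": {"execute_payment"},
    },
    needs_human={"execute_payment"},
)
```

With this setup, `gate.check("growth_loop", "execute_payment")` is refused because the capability was never granted, and `gate.check("finance_loop", "execute_payment")` is refused until a human approves. No executive persona is needed for either rule.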

Measure architecture, not just outputs. Track coordination tokens, handoffs per completed objective, retries from cross-agent ambiguity, loop cycle time, and cost per successful end-to-end unit. If quality holds steady but coordination spend rises, your design is rotting.
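
These metrics can be computed from per-run logs. The sketch below assumes each run record already separates coordination tokens from total tokens. The `RunRecord` shape and field names are invented for illustration, and the sample numbers are made up to show the calculation, not measured.

```python
from dataclasses import dataclass


@dataclass
class RunRecord:
    """One attempt at an end-to-end objective (hypothetical log shape)."""
    succeeded: bool
    total_tokens: int
    coordination_tokens: int  # tokens spent on agent-to-agent messages
    handoffs: int
    cost_usd: float


def architecture_metrics(runs: list[RunRecord]) -> dict:
    successes = sum(1 for run in runs if run.succeeded)
    total_tokens = sum(run.total_tokens for run in runs)
    if successes == 0 or total_tokens == 0:
        raise ValueError("need at least one successful run with tokens recorded")
    return {
        "coordination_share": sum(r.coordination_tokens for r in runs) / total_tokens,
        "handoffs_per_success": sum(r.handoffs for r in runs) / successes,
        "cost_per_success": sum(r.cost_usd for r in runs) / successes,
    }


# Illustrative records only.
runs = [
    RunRecord(True, 1000, 200, 2, 0.5),
    RunRecord(False, 1000, 600, 6, 0.5),
    RunRecord(True, 2000, 200, 2, 1.0),
]
metrics = architecture_metrics(runs)
```

Failed runs count toward cost and handoffs but not toward the denominator, so wasted coordination shows up as a higher cost per successful unit.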

8. The counterarguments worth taking seriously

Critics of this thesis aren't always wrong.

"Role structure improves accountability." True in regulated environments. Auditors need traceability. Response: implement traceability as a logging and policy layer, not as a mandatory executive role lattice. You can trace decisions without pretending each control point is a person.

"Separation of duties is required for safety." Also true — a marketing agent shouldn't execute financial transactions. Response: enforce least privilege at the capability boundary. This is a security principle, and it works fine without org-chart cosplay.

"Parallel tasks benefit from multiple agents." Yes. DeepMind itself found 80.9% gains on parallelizable workloads under structured coordination. Response: parallelize independent compute where it objectively helps. Don't smuggle in human hierarchy where it doesn't.

The issue is not single-agent ideology versus multi-agent ideology. The issue is whether your structure is justified by workload physics and risk controls — or inherited from office anthropology.

9. Bottom line

The current wave of agentic organizations is copying a blueprint from the wrong species.

Human corporate structures were optimized for human cognitive and social limits — from Smith's division of labor to Weber's bureaucracy to Drucker's decentralization. Each was a rational response to biological constraints. AI systems have different constraints. When we force AI into CEO/CFO org charts, we import the overhead and dysfunction without the original justification.

The research signal is strong: DeepMind shows coordination amplifies error up to 17.2x. Berkeley's MAST shows 41–86.7% failure rates, with coordination breakdown as the largest single failure category. McEntire's controlled experiments show org-chart pipelines at 0% success. Gartner projects 40%+ of agentic AI projects canceled by 2027.

TomBot's reorganization showed what happens when you actually delete the theater: roughly 40–60% token cost reduction, no inter-agent messaging overhead, faster execution. In production. Block's independent arrival at the same thesis from the corporate side supports the direction.

Two thousand years of organizational design — from Roman legions to railroad org charts to matrix management to Spotify squads — has been an attempt to work around one fundamental constraint: humans can only manage a few other humans, so you need layers. AI doesn't have that constraint.

So yes, be bold: kill hierarchy cosplay, keep only structure that buys reliability, safety, or legal compliance, and build around loops, state, and measurable outcomes.

And stay honest: we still don't have the final theory. But we know enough to stop copying 1854.

Key Sources

- Google DeepMind, Towards a Science of Scaling Agent Systems (2025) — arXiv:2512.08296

- UC Berkeley MAST, Why Do Multi-Agent LLM Systems Fail? (2025) — arXiv:2503.13657

- Jeremy McEntire, The Organizational Physics of Multi-Agent AI (2026) — Zenodo

- Block, From Hierarchy to Intelligence (2026) — block.xyz

- Gartner, Over 40% of Agentic AI Projects Will Be Canceled by 2027 (2025)

- Klarna AI case study — OpenAI

This article was researched and written with AI assistance.
